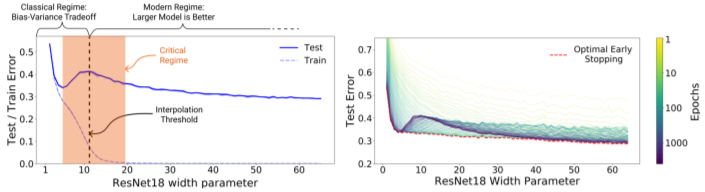

[Deep Double Descent: Where Bigger Models and More Data Hurt](https://arxiv.org/abs/1912.02292)

> We show that a variety of modern deep learning tasks exhibit a "double-descent" phenomenon where, as we increase model size, performance first gets worse and then gets better.

This paper comes from Harvard University and OpenAI. Previously..
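The paper's claim that test performance first gets worse and then improves as model size grows can be seen in a much simpler setting than the deep networks it studies. The sketch below substitutes minimum-norm ("ridgeless") linear regression that uses only the first `p` of `D` Gaussian features. This is a standard toy model for double descent, not the paper's experimental setup. The helper names (`solve`, `gram`, `min_norm_lstsq`, `excess_risk`) and all sizes (20 training points, 100 features, noise 0.5, 30 trials) are illustrative assumptions. In this setup, test error should peak near the interpolation threshold `p = n` and then fall again once `p` exceeds it.

```python
import math
import random


def solve(a, b):
    """Solve the square system a @ x = b (Gaussian elimination, partial pivoting)."""
    m = len(a)
    aug = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented copy
    for col in range(m):
        pivot = max(range(col, m), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, m):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, m + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * m
    for r in range(m - 1, -1, -1):  # back substitution
        s = aug[r][m] - sum(aug[r][c] * x[c] for c in range(r + 1, m))
        x[r] = s / aug[r][r]
    return x


def gram(rows):
    """Return rows @ rows^T for a list of equal-length vectors."""
    return [[sum(u * v for u, v in zip(r1, r2)) for r2 in rows] for r1 in rows]


def min_norm_lstsq(x, y):
    """Least squares fit; the minimum-norm interpolating solution when p > n."""
    n, p = len(x), len(x[0])
    if p <= n:
        cols = [list(col) for col in zip(*x)]  # X^T stored as p rows
        rhs = [sum(c * yi for c, yi in zip(col, y)) for col in cols]  # X^T y
        return solve(gram(cols), rhs)  # (X^T X) b = X^T y
    a = solve(gram(x), y)  # (X X^T) a = y
    return [sum(x[i][j] * a[i] for i in range(n)) for j in range(p)]  # b = X^T a


def excess_risk(p, n, d, noise_std, trials, seed=0):
    """Average excess test error of a model that sees only the first p features."""
    rng = random.Random(seed)  # same seed => same datasets for every p
    beta = [1.0 / math.sqrt(d)] * d  # true weights, ||beta||^2 = 1
    total = 0.0
    for _ in range(trials):
        x_full = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(n)]
        y = [sum(xi * bi for xi, bi in zip(row, beta)) + rng.gauss(0.0, noise_std)
             for row in x_full]
        b_hat = min_norm_lstsq([row[:p] for row in x_full], y)
        # For test inputs x ~ N(0, I_d) the expected squared error minus the noise
        # variance equals ||b_hat - beta[:p]||^2 + ||beta[p:]||^2.
        total += sum((bh - bt) ** 2 for bh, bt in zip(b_hat, beta[:p]))
        total += sum(bt ** 2 for bt in beta[p:])
    return total / trials


N_TRAIN, D_TOTAL = 20, 100
SIZES = [5, 10, 20, 40, 100]
risks = {p: excess_risk(p, N_TRAIN, D_TOTAL, noise_std=0.5, trials=30) for p in SIZES}
for p in SIZES:
    print(f"p={p:3d}  excess test risk={risks[p]:.3f}")
```

Every model size is fit on identical datasets because the seed is reused, so differences between rows come only from `p`. At `p = n` the design matrix is square and often nearly singular. The fitted weights then amplify the noise, which produces the spike. Past that point the minimum-norm solution spreads the fit across many directions, and the error drops again, in this toy run even below the best small model.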